I listen to hours of podcasts every week. Most of it disappears from memory. So I built a system that turns passive listening into a permanent, searchable knowledge base - without ever taking a single note.

The Disappearing Knowledge Problem

Here's what used to happen. I'd listen to a great podcast episode on a walk. Someone would share a framework for thinking about relationships, or break down how a CEO approaches capital allocation, or explain why a particular geopolitical event matters more than people think. I'd be nodding along, thinking "I should remember this."

I never did.

Within a week, I'd forget the specifics. Within a month, I'd forget I'd listened to the episode at all. Somewhere in my listening history were hundreds of hours of insights from some of the smartest people in the world - and I couldn't access any of it.

The obvious solution is to take notes. But I hate taking notes while listening. It breaks the flow. I listen while walking, driving, cooking. I even built Thoughtly, a voice-first app for capturing thoughts on the go. It's great for quick ideas, but it still requires me to pause, think about what to capture, and speak it. For a two-hour podcast with dozens of insights, that's just not realistic.

So I asked a different question: what if I didn't have to take notes at all? What if the system could figure out what I listened to, get the transcript, extract the insights, and file them away - all automatically?

How It Works

The system connects four tools I already use: a podcast app, a read-later service, a note-taking app, and an AI assistant. No new apps, no new subscriptions.

Every night at 11pm, a script syncs my listening history and sends finished episodes to be transcribed. By 8am, the transcripts are in my Obsidian vault. When I'm ready, a single command tells the AI to read every transcript, extract insights, and update my knowledge base.

I don't take a single note. I just listen.

What It Captures

Every morning, a daily log appears in my Obsidian vault. It knows what I listened to, how long I spent, and whether I finished each episode.

It's smart about what counts as "finished" and avoids duplicates across days. I didn't have to configure any of this.
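As an illustration only, a "finished" rule with de-duplication could be sketched like this. The 90% threshold, the `Play` record, and the function names are assumptions made for the example, not the rules the actual script uses.

```python
from dataclasses import dataclass

# Assumed threshold: an episode counts as finished once 90% has been played
# (this lets skipped outros and trailing ads still count).
FINISHED_RATIO = 0.9


@dataclass(frozen=True)
class Play:
    """One listening record; the field names are hypothetical."""
    episode_id: str
    title: str
    listened_seconds: int
    duration_seconds: int


def is_finished(play: Play, ratio: float = FINISHED_RATIO) -> bool:
    """Return True when enough of the episode has been played."""
    if play.duration_seconds <= 0:
        return False  # unknown duration: never treat as finished
    return play.listened_seconds / play.duration_seconds >= ratio


def new_finished(plays: list[Play], already_logged: set[str]) -> list[Play]:
    """Keep finished episodes that were not logged on an earlier day,
    and drop repeats of the same episode within this batch."""
    seen = set(already_logged)
    fresh = []
    for play in plays:
        if play.episode_id in seen or not is_finished(play):
            continue
        seen.add(play.episode_id)
        fresh.append(play)
    return fresh
```

Persisting the set of logged episode IDs between runs (for example in a small JSON file) is what keeps an episode from showing up again in the next day's log.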

I Get a Push Notification Every Morning

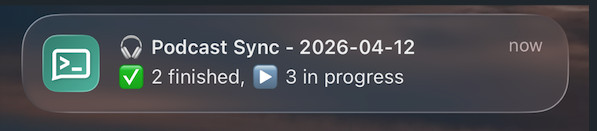

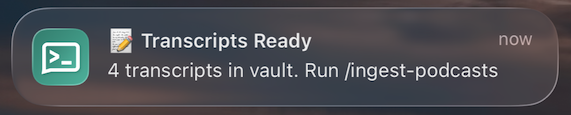

The system sends a push notification to my phone after each run. The nightly sync tells me how many episodes were logged. The morning run tells me when transcripts are ready to ingest.

Tapping the sync notification opens my Obsidian Podcast Log directly. No friction.
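A notification like this can be sketched in a few lines of standard-library Python. ntfy accepts a plain HTTP POST to a topic URL, with `Title` and `Click` headers controlling the heading and the tap action, and Obsidian opens notes through `obsidian://open` links. The topic, vault, and note names below are placeholders, and the function names are assumptions.

```python
import urllib.request
from urllib.parse import quote


def build_notification(topic: str, message: str, title: str,
                       vault: str, note_path: str,
                       server: str = "https://ntfy.sh") -> urllib.request.Request:
    """Build (but do not send) an ntfy POST whose tap action opens an Obsidian note."""
    # Obsidian URI values must be percent-encoded, including "/" in the note path.
    click_url = (
        f"obsidian://open?vault={quote(vault, safe='')}"
        f"&file={quote(note_path, safe='')}"
    )
    return urllib.request.Request(
        f"{server}/{topic}",
        data=message.encode("utf-8"),
        headers={"Title": title, "Click": click_url},
        method="POST",
    )


def send(request: urllib.request.Request) -> int:
    """Send the notification and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Keeping the request builder separate from `send` makes the message easy to inspect or test without touching the network.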

From Transcript to Insight

This part was inspired by Andrej Karpathy's idea of an "LLM wiki" - a knowledge base that an AI maintains and grows over time, rather than you manually organizing everything. Instead of a static set of notes, you get a living wiki that updates itself as new information comes in.

The AI reads the full transcript and doesn't just summarize. It extracts structured insights and files them into an evolving knowledge base, connecting new ideas to things I've already learned.

Here's a real example. I listened to a two-hour conversation with an entrepreneur about life, money, and relationships. The AI identified and extracted insights across five completely different topics. Here are three of them:

On relationships

"The worst relationship is when you put the pressure of being happy on your partner. The best relationship is when you are happy and you share that happiness." - This got filed alongside insights from other episodes about marriage, attachment theory, and the 5 love languages. Connections I never would have made manually.

On Gen Z and patience

"Your generation is the on-demand generation. You extend the same expectation to impact, to love, to growth." - A new topic page was created, since nothing in my knowledge base covered generational differences before.

On investing

A specific investment thesis and portfolio allocation strategy - this got added to my existing investing page, alongside notes from financial podcasts I'd listened to months earlier.

One episode. Five topics identified. Three existing knowledge pages updated. Two new pages created. All without me writing a word.

The Knowledge Compounds

The most surprising thing about this system is how knowledge compounds across episodes.

When a CEO talks about AI infrastructure spending in one episode, and a different podcast covers the memory chip shortage, and a third discusses how it affects cloud pricing - the AI connects all three. My "AI Industry" page synthesizes insights from interviews with tech CEOs, venture capital discussions, and geopolitical analysis. I could never have done this manually, because I wouldn't remember a specific detail from an episode I heard three weeks earlier.

I can now ask: "What have I learned about investing from all my podcasts?" and get an answer that pulls from a dozen different episodes across months of listening. Or "What frameworks have I heard for thinking about relationships?" and get a synthesis of everything from clinical psychology episodes to entrepreneurial wisdom.

The goal isn't to remember every podcast. It's to build a knowledge base that grows smarter the more I listen.

What I Got Wrong at First

I tried to take notes. For years, I tried different approaches to capturing podcast insights - voice memos, quick notes apps, even pausing to type. None of them stuck because they all interrupt the listening experience. The breakthrough was realizing I shouldn't be in the loop at all.

I tried predefined categories. My first version had a fixed list of topics: "Finance," "Career," "Relationships." But I listen to everything from geopolitics to parenting to crypto to food. The better approach: let the AI identify topics from the actual content and grow the knowledge base organically. A podcast about oil markets creates a geopolitics page. One about habits updates the self-improvement page. The taxonomy emerges from what I actually listen to.
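The filing step of an emergent taxonomy can be pictured as "append to the topic page if it exists, otherwise create it." Here is a minimal sketch under assumed conventions: a `Topics` folder inside the vault, one Markdown file per topic, and hypothetical function names. In the real system the AI decides the topic and wording; this only shows the create-or-update mechanics.

```python
from pathlib import Path


def slugify(topic: str) -> str:
    """Turn a topic name into a lowercase, hyphenated file name stem."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in topic)
    return "-".join(cleaned.split())


def file_insight(vault: Path, topic: str, insight: str, source: str) -> bool:
    """Append an insight to the topic's page.

    Returns True if the page had to be created, False if it already existed.
    """
    page = vault / "Topics" / f"{slugify(topic)}.md"
    page.parent.mkdir(parents=True, exist_ok=True)
    created = not page.exists()
    if created:
        # A new topic gets a page with just a heading to start from.
        page.write_text(f"# {topic}\n\n", encoding="utf-8")
    with page.open("a", encoding="utf-8") as handle:
        handle.write(f"- {insight} (source: {source})\n")
    return created
```

Because the file name comes from the normalized topic, "Gen Z & Patience" and "gen z & patience" land on the same page instead of creating near-duplicates.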

I summarized too quickly. The AI initially only read the first chunk of each transcript and missed half the insights. A two-hour conversation might start with investing and end with a profound take on relationships. Now it reads everything before extracting anything.
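One way to guarantee full coverage, if a transcript is too long to read in one pass, is to walk it in overlapping chunks and only consolidate once every chunk has been seen. This is a sketch of that technique, not necessarily how the pipeline does it: the chunk sizes are arbitrary, and `extract` is a hypothetical stand-in for whatever call asks the AI for insights.

```python
from typing import Callable, Iterator


def chunk_words(text: str, size: int = 2000, overlap: int = 200) -> Iterator[str]:
    """Yield overlapping word windows that together cover the whole text."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    for start in range(0, len(words), step):
        yield " ".join(words[start:start + size])
        if start + size >= len(words):
            break  # the last window already reached the end


def extract_all(text: str, extract: Callable[[str], list[str]],
                size: int = 2000, overlap: int = 200) -> list[str]:
    """Run `extract` on every chunk, then return de-duplicated insights in order."""
    insights: list[str] = []
    for chunk in chunk_words(text, size=size, overlap=overlap):
        for insight in extract(chunk):
            if insight not in insights:
                insights.append(insight)
    return insights
```

The overlap means an idea that straddles a chunk boundary still appears whole in at least one window, and the de-duplication step removes what the overlap picks up twice.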

The Setup

If you want to build something similar, here's the shape of it:

- A podcast app that exports your listening data (I use Overcast, which has an OPML export)

- A transcription service that can process podcast episodes (I use Readwise Reader, which transcribes automatically)

- A note-taking app that stores plain text files you can access programmatically (I use Obsidian)

- An AI assistant that can read and write to your vault (I use Claude)

- A push notification service to know when syncs complete (I use ntfy, which is free and requires no account)

- A computer that's always on to run the nightly sync (a Mac Mini in my case)

It's a single Python file with one dependency. Total added running cost: $0/month (the script runs on my own machine, and I was already paying for Readwise).
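To give a feel for the first step, here is a rough sketch of reading played episodes out of an OPML export using only the standard library. The attribute names (`type="podcast-episode"`, `played="1"`, `title`, `url`) are assumptions about the export format, so check them against your own file before relying on them.

```python
import xml.etree.ElementTree as ET


def played_episodes(opml_text: str) -> list[dict[str, str]]:
    """Return title and URL for every episode outline marked as played.

    The attribute names used here are assumed, not taken from a spec.
    """
    root = ET.fromstring(opml_text)
    episodes = []
    for node in root.iter("outline"):
        if node.get("type") == "podcast-episode" and node.get("played") == "1":
            episodes.append({
                "title": node.get("title", ""),
                "url": node.get("url", ""),
            })
    return episodes
```

OPML is plain XML with nested `outline` elements, so `root.iter("outline")` finds episodes at any nesting depth, including those grouped under their parent show.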

What's Next

Podcasts are just one input. I'm building a broader personal knowledge system that also ingests article highlights, book notes, and meeting notes. The same pattern applies: capture automatically, let AI extract and connect, build a knowledge base that grows with me.

The end goal is simple: a single place where I can ask a question about anything I've read, heard, or thought - and get an answer grounded in my own experience, not the internet.

Built with Claude Code. The entire pipeline - script, automation, knowledge base integration, and this post - was built in a single conversation.